blog

How to Manage All Your MySQL or MariaDB Databases

According to Forrester, a DBA in a large enterprise manages between 8 and 275 databases, with the industry average being 40 databases to a DBA. Larger databases usually require extra effort around tuning, backup, recovery and upgrade. Cloud, as well as automation and management tools can help improve the number of databases managed by one DBA.

With that background, we were pretty excited to introduce support for management of single-instance MySQL and MariaDB databases in ClusterControl 1.2.6. The majority, if not all of the cluster users out there, use single-instance or master-slave replicated setups along their mission-critical clusters. So today, it is possible to manage an entire MySQL/MariaDB environment from one interface.

Ok, so what about Nagios or Zabbix? Unless Nagios is deploying your instances, scaling them, recovering them if they fail, taking backups, upgrading them to newer versions, telling you about slow running queries or suboptimal configuration parameters, we’d argue that you probably ought to look into a management tool.

In today’s blog post, we’ll show you how you set that up.

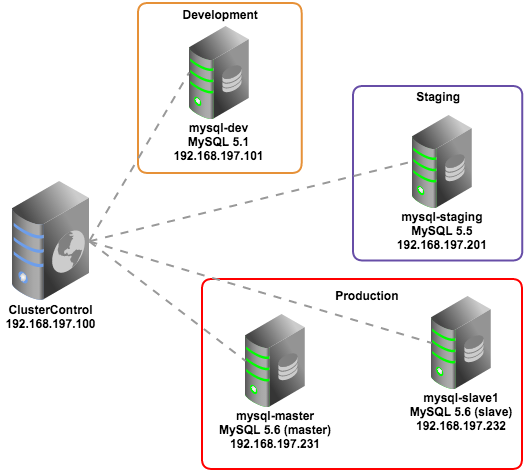

Our setup consists of MySQL servers running different versions (5.1, 5.5 and 5.6) and on different hosts:

Installing ClusterControl

Deploy ClusterControl on a dedicated host (192.168.197.100) using these simple steps:

$ wget https://severalnines.com/downloads/cmon/install-cc.sh

$ chmod u+x install-cc.sh

$ sudo ./install-cc.shThis will automate the installation of ClusterControl, but you can read more about this in the ClusterControl Quick Start Guide.

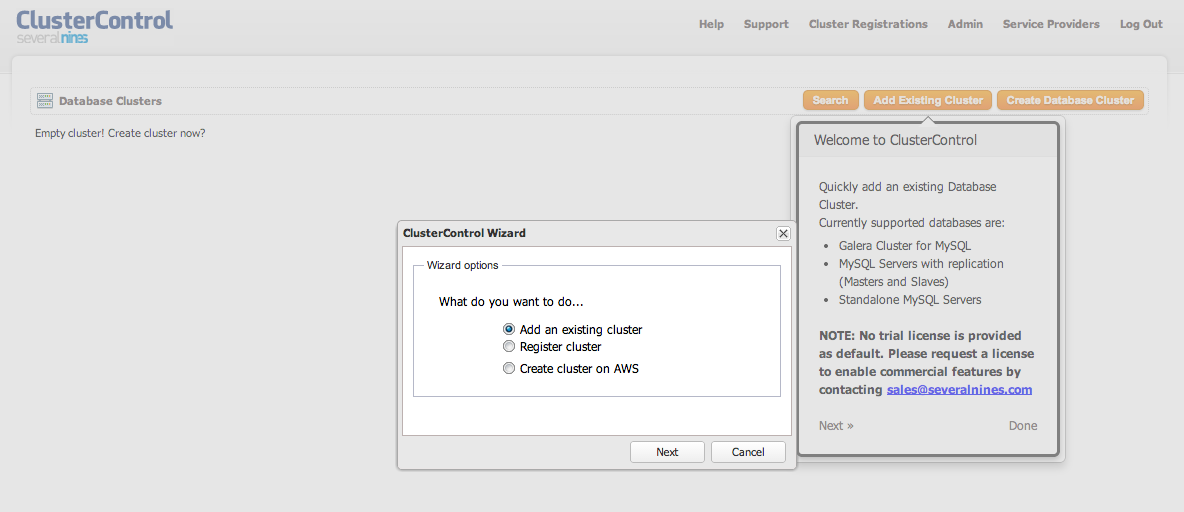

Once the installation is complete, login to the ClusterControl UI at http://192.168.197.100/clustercontrol using your email address (that you entered during the installation process) and default password ‘admin’. You should see something like below:

Preparing the Database Hosts

You do not need to install any agent on the database hosts, but ClusterControl needs to be able to SSH into them and connect to the database instance to gather statistics.

We assume your MySQL instances have been configured with a root password.

- Make sure the MySQL servers that you are going to monitor are not listening to local IP address. ClusterControl must able to access the MySQL server remotely. You can use the following command to check:

$ netstat -tulpn | grep 3306 tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN 1497/mysqld** 0.0.0.0 means MySQL is listening on all interfaces of that host.

- Generate an RSA key on ClusterControl node:

$ ssh-keygen -t rsa # Press Enter on all prompts - Configure passwordless SSH from ClusterControl node to the database nodes:

$ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.101 $ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.201 $ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.231 $ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.232 - Check that you can run the following command from the ClusterControl host:

$ ssh [user]@[IP address] "ls /root"or if you are running as sudo user:

$ ssh -t [user]@[IP address] "sudo ls /root"

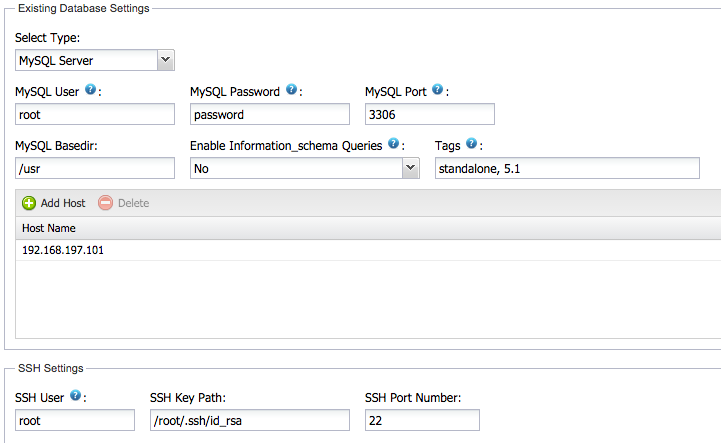

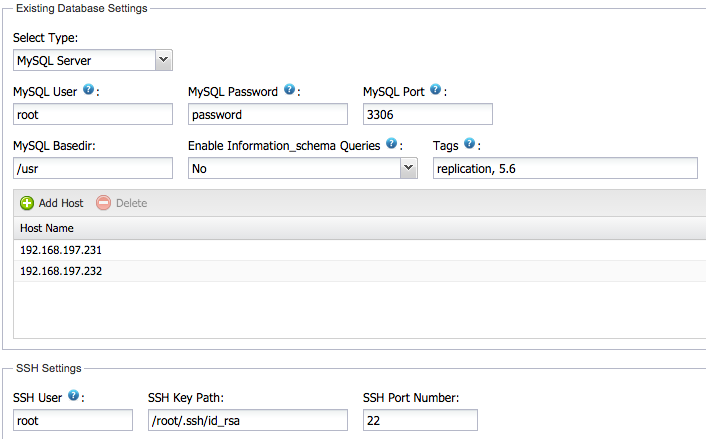

Importing MySQL Single Instances

We will now add these MySQL instances to ClusterControl. Since we are having different type of environments, we will separate them into different groups. From the UI, choose Add an Existing Cluster > MySQL Server. Specify the information required (MySQL password, etc.) and click Add Cluster, similar to screenshot below:

ClusterControl will attempt to run the following background tasks:

- Verify controller host and cmon password.

- Verify the SSH connection to database host.

- Verify the MySQL user/password.

- Verify the node status either standalone or master/slave

- Add the database node into ClusterControl provisioning list

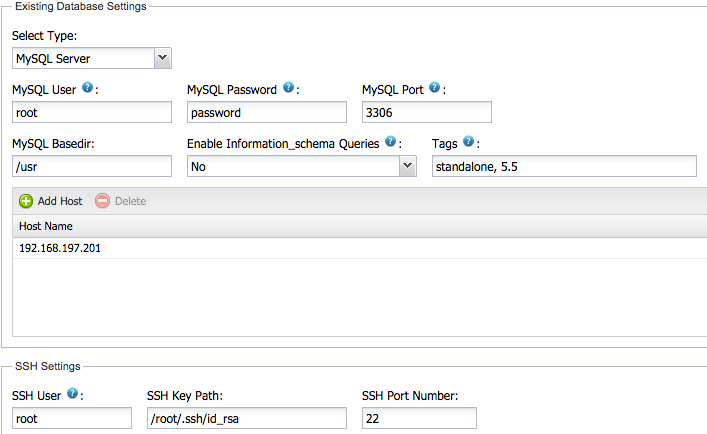

Repeat the above steps for the other nodes. For the second server (staging):

For the third environment (production), we want to group the master and the slave so we will specify both IPs in the list:

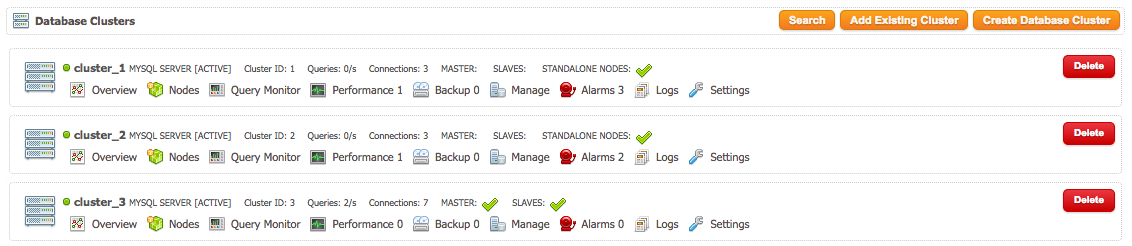

Once the MySQL instances have been imported, ClusterControl will list out three “clusters” with different Cluster ID:

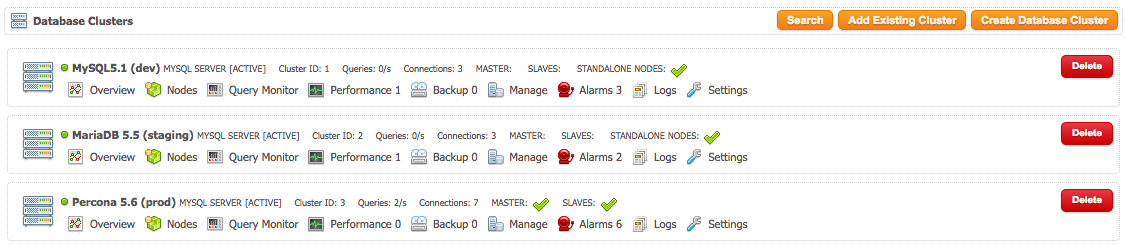

You can rename these groups:

Congratulations, you can now start managing all your instances from ClusterControl.

Notes

ClusterControl will add the specified instances with a distinct cluster or group (even though the node that you are going to import is a standalone MySQL server). For each cluster, ClusterControl assigns a cluster ID with respective CMON configuration files. If you search for cmon configuration files, you would see:

$ ls -1 /etc/cmon*

/etc/cmon.cnf

/etc/cmon.d:

cmon_1.cnf

cmon_2.cnf

cmon_3.cnfThe first cmon.cnf is a default configuration file comes during installation (install-cc.sh). This is a minimal ClusterControl configuration that needs to be run before it can import clusters. The remaining cmon_n.cnf indicates the configuration file for respective cluster ID. For each cluster, it will have its own CMON log files located under /var/log directory:

$ ls -1 /var/log/cmon*

/var/log/cmon_1.log

/var/log/cmon_2.log

/var/log/cmon_3.log

/var/log/cmon.logSo, what do you get with ClusterControl?

-

Live up-to-date information on all your databases

From the UI, you can get things like:

- How many instances do I have?

- How loaded are they?

- How healthy are they?

- How are my databases growing/shrinking?

- Will I be running out of resources, or do I need to add more RAM, CPU or disk?

- Are there performance issues?

- Are they backed up?

- Are there any slow queries that should be tuned or cached in the application layer?

- What versions are they on?

-

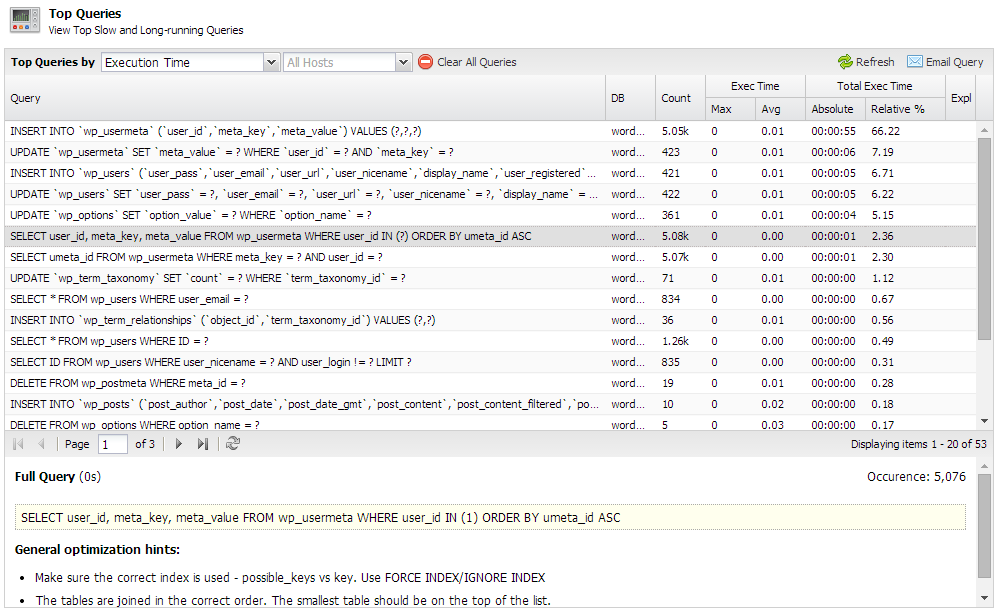

Query monitoring

The query monitor gives you a view of all your queries happening on all the servers in a group of instances. You can sort by most expensive queries, or the most common ones. To enable this, go to

ClusterControl > Settings > Query Monitor and set Query Sample Time to more than 1.

For details on this, please refer to Top Queries section under ClusterControl User Guide for MySQL.

-

Performance Advisors

The health of a MySQL server may vary over time, as schemas, application workloads or available resources might change. ClusterControl automatically examines your database configurations and performance levels, and identifies any deviations from best practice rules. This is an effective way to make sure your database is always operating at its optimal potential.

-

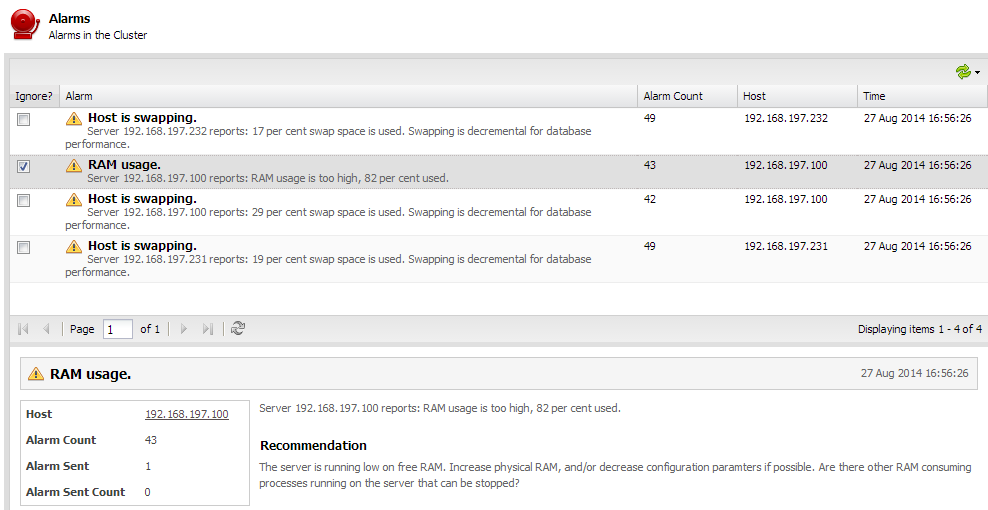

Alerts in case of problems

This allows you to get alerted in case of problems coming from your database instance, or from the host it is running on.

-

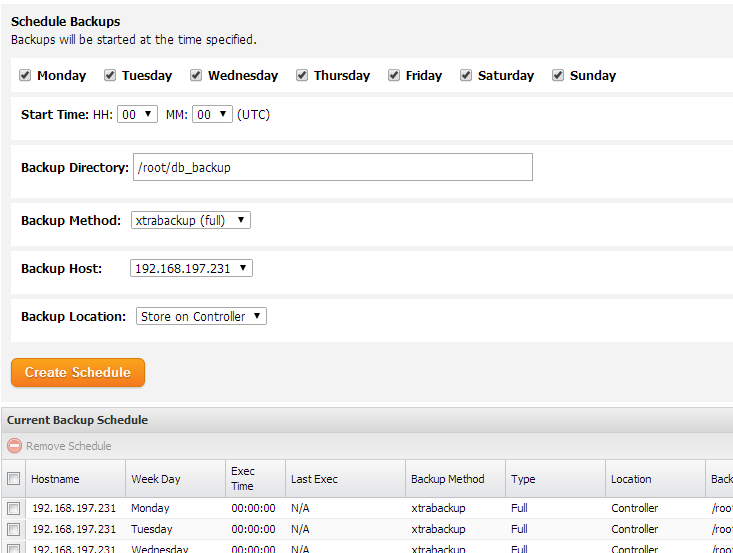

Backups

You can easily schedule full or incremental backups across your instances using XtraBackup.

There’s plenty more features to discover, e.g. deployment of instances or restart in case of failure. Give it a try, and let us know if it allows you to manage more databases.