blog

Online Schema Upgrade in MySQL Galera Cluster using TOI Method

This post examines the way DDL changes are propagated in Galera, and details the steps to upgrade the schema using the TOI method with Percona’s pt-online-schema-change.

The two methods (TOI and RSU) have both their pros and cons, and given parameters like table size, indexes, key_buffer_size, disk speed, etc., it is possible to estimate the time taken for the schema to be upgraded.

Please note that a schema change is non-transactional so it would not be possible to rollback the DDL if it fails midway. Therefore, it is always recommended to test the schema changes and ensure you have recoverable backups before performing this on your production clusters.

Schema Changes in MySQL

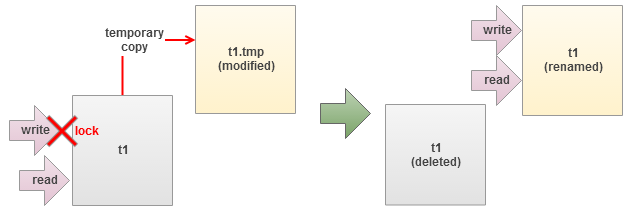

MySQL handle table altering by exporting the table data into a temporary table, changing the table structure, and then importing the data back into the table before finally rebuilding all the indexes. Thus, write blocking is the only choice to maintain data integrity. This stalls all the writes after the ALTER command has been issued, up until it completes.

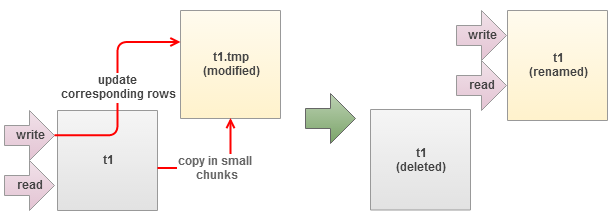

Percona has built a tool to overcome this problem with its Percona Toolkit bundle called pt-online-schema-change. It allows altering of tables without locking them, and works in the following manner:

- Creates a shadow copy of target table

- Installs triggers in source table to forward updates to target table

- Copies source table data in small chunks to target table

- Renames target table to replace the source table

However, the tool will not work if any triggers are already defined on the table. For more detailed explanation about the tool, please refer to Percona Toolkit documentation page.

Schema Changes in Galera

DDL statements (ALTER, CREATE, RENAME, TRUNCATE, DROP) are replicated in statement level. Galera has two inbuilt methods in handling these statements:

- Total Order Isolation (TOI)

- Rolling Schema Upgrade (RSU)

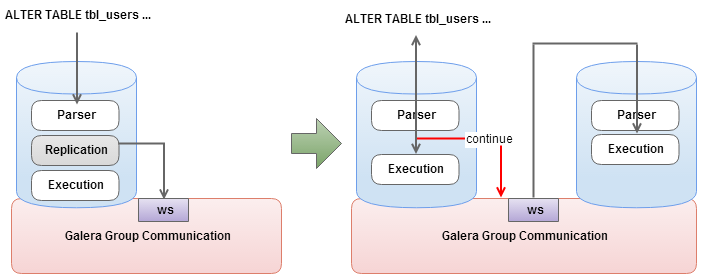

Total Order Isolation

This is the default DDL replication method. Master node (node that originates the writeset) detects DDL at parsing time and sends out a replication event for the SQL statement before even starting the DDL processing. The DDL replication happens in STATEMENT format and every node in the cluster will process the replicated DDL at the same “slot” in the cluster transaction stream (comply to Total Order).

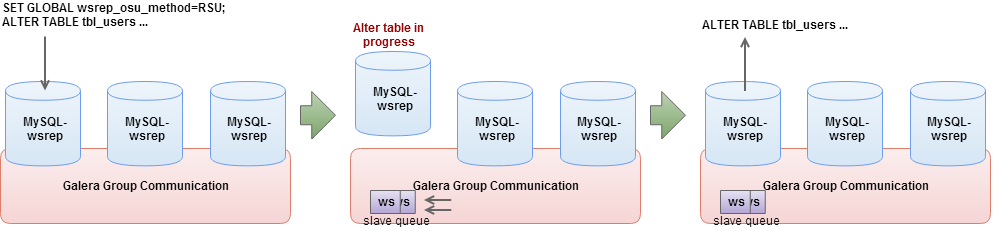

Rolling Schema Upgrade

This method will desynchronize the node from replication for the duration of corresponding DDL command. All incoming replications are buffered and nothing will be replicated out of the node. When the DDL processing is over, the node will automatically join back in cluster and catch up missed transactions from the buffer (gcache). Adjust the gcache size big enough to contain the pending writesets and to allow IST after the DDL is over. Once the node has rejoined and caught up with the rest of the cluster, repeat the process on the next node in the cluster.

Comparison

| Total Order Isolation (TOI) | Rolling Schema Upgrade (RSU) | |

| Advantages |

|

|

| Disadvantages |

|

|

| Use Cases |

|

|

Performing Schema Upgrade

With respect to how Galera handles DDL statements, we have two categories of methods:

Instant method:

- TOI only – Perform schema upgrade to all nodes in the cluster. This method will lock the table similar to how default schema changes happened in MySQL.

- TOI with pt-online-schema-changes – Perform schema upgrade to all nodes in the cluster without locking up the table.

Rolling method:

- RSU with wsrep_desync and wsrep_on – Perform schema upgrade on the local node and keep receiving replication events but not sending out replication events. This method is good for non-conflicting operations and it will not slow down the cluster. Even though the upgraded node replicates writesets, in case of conflicts, note that the writeset will be aborted.

- RSU with node dropping – Perform schema upgrade on the local node in isolation mode where the designated node is taken out of the cluster. The node will not receiving/sending any replication events. When DDL is over, the node will automatically join the cluster and catch up on missed transactions from the buffer (gcache) . Joining the node back must only happen through IST.

Performing an online upgrade requires backward schema compatibility, whereby your application should be able to use both old and new schema. MySQL guarantees ROW replication event compatibility with some limitations:

- Source and target can have different number of columns

- Columns must be in the same order

- New columns in the end, and must have default values

- Some data type conversions are also supported

Newer MySQL versions tolerate more variation between source and target tables as described in details at MySQL Documentation page.

Step-by-step – Performing Online Schema Upgrade in Galera using TOI and pt-online-schema-change

Assume we have a table called tbl_points with 1.2 million of rows. We will add one column called remarks in the end using TOI method with pt-online-schema-changes. Here’s our table definition:

CREATE TABLE `tbl_points` ( `id` int(11) NOT NULL AUTO_INCREMENT, `name` varchar(45) NOT NULL, `point` int(11) NOT NULL, `user_id` int(11) NOT NULL, PRIMARY KEY (`id`), KEY `fk_users_points_idx` (`user_id`), CONSTRAINT `fk_users_points` FOREIGN KEY (`user_id`) REFERENCES `tbl_users` (`id`) ON DELETE CASCADE ON UPDATE NO ACTION ) ENGINE=InnoDB AUTO_INCREMENT=3610262 DEFAULT CHARSET=latin1

1. Download and install Percona Toolkit on one of the DB node in primary component:

$ wget percona.com/get/percona-toolkit.rpm $ rpm -Uhv percona-toolkit-2.2.5-2.noarch.rpm

2. Make sure wsrep_OSU_method value is TOI:

mysql> SHOW VARIABLES LIKE '%wsrep_osu%'<span>; +------------------+-------+ | Variable_name | Value | +------------------+-------+ | wsrep_OSU_method | TOI | +------------------+-------+

3. Start the schema upgrade using pt-online-schema-change:

$ pt-online-schema-change -uroot -pMyR00tPassword --alter=”ADD COLUMN remarks VARCHAR(255)” D=mydb,T=tbl_points --execute Operation, tries, wait: copy_rows, 10, 0.25 create_triggers, 10, 1 drop_triggers, 10, 1 swap_tables, 10, 1 update_foreign_keys, 10, 1 Altering `mydb`.`tbl_points`... Creating new table... Created new table mydb._tbl_points_new OK. Altering new table... Altered `mydb`.`_tbl_points_new` OK. 2013-12-10T10:09:44 Creating triggers... 2013-12-10T10:09:44 Created triggers OK. 2013-12-10T10:09:44 Copying approximately 1203656 rows... ... Copying `mydb`.`tbl_points`: 97% 01:43 remain Copying `mydb`.`tbl_points`: 98% 01:14 remain Copying `mydb`.`tbl_points`: 98% 00:49 remain Copying `mydb`.`tbl_points`: 99% 00:24 remain 2013-12-10T11:15:00 Copied rows OK. 2013-12-10T11:15:00 Swapping tables... 2013-12-10T11:15:01 Swapped original and new tables OK. 2013-12-10T11:15:01 Dropping old table... 2013-12-10T11:15:01 Dropped old table `mydb`.`_tbl_points_old` OK. 2013-12-10T11:15:01 Dropping triggers... 2013-12-10T11:15:01 Dropped triggers OK. Successfully altered `mydb`.`tbl_points`.

The copy process is performed in small chunks of data, which are varied to attempt to make them execute in a specific amount of time. The tool also reports the remaining time for copying, which is especially helpful when estimating the operation time. At the same time, applications can still read/write to the old table while triggers transparently update the corresponding rows into the new table.

When the tool has finished copying data into the new table, it will rename the original and the new tables before finally dropping the original table. Take note that foreign key object name for this table has been changed slightly to avoid object name collisions in MySQL and InnoDB (fk_users_points → _fk_users_points).

In a next post, we will cover the steps on how to do schema changes using the RSU method.

References

- Percona Toolkit Documentation page – http://www.percona.com/doc/percona-toolkit/2.2/